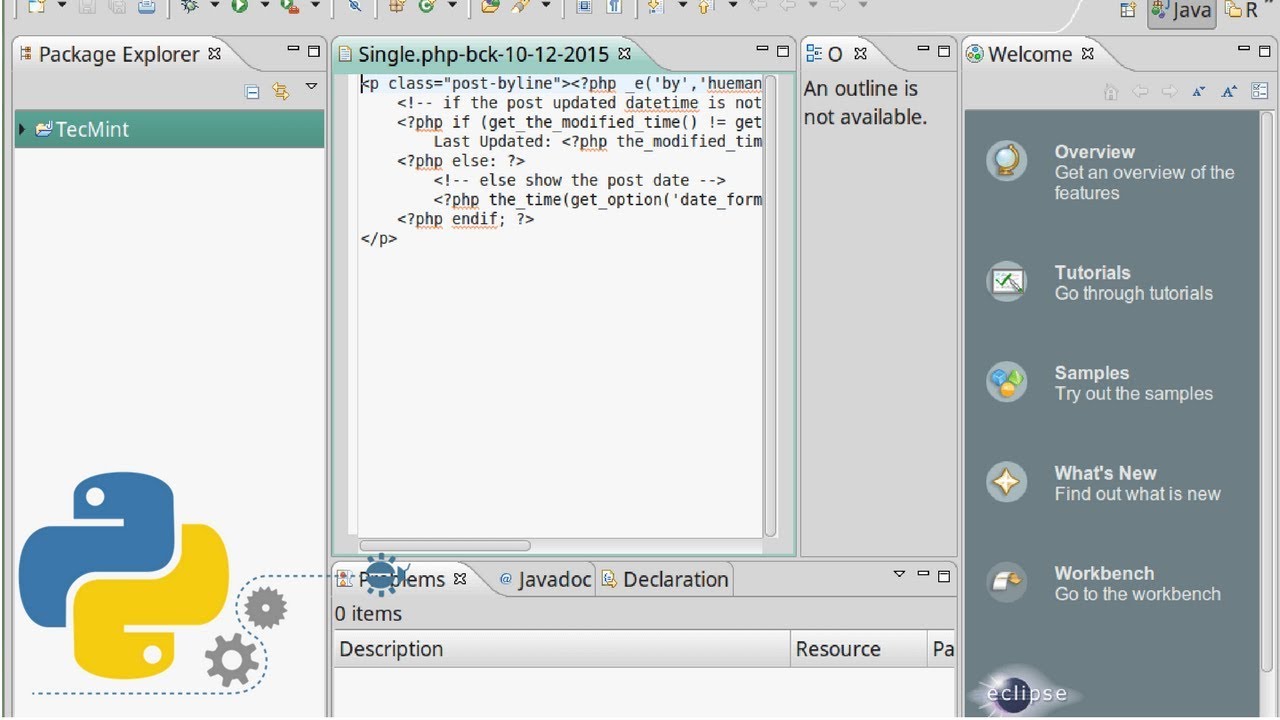

Really? An article on downloading and saving an XML file? “Just use requests mate!”, I hear you all saying. Well, it wasn’t as straightforward as that, at least not for a beginner like me.

Parsing is Different to Saving

For sure, experts and beginners alike will have used requests to pull down the contents of a web page. Generally it’s for the purpose of parsing or scraping that page for specific data elements. What if you wanted to actually save that web page to your local drive? Things get slightly different. You’re no longer just reading a text rendered version of the page, you’re trying to save the actual page in its original state.

I’m talking XML here because I was/am trying to download the actual XML file for an RSS feed I wanted to parse offline. I wasn’t dealing with a text = r.text situation anymore, I was trying to maintain the original format of the page as well, tabs and all. For those of you playing at home, this is for our PyBites Code Challenge 17 (hint hint!).

Why Download when you can just Parse the feed itself?

Good question! It’s about best practice and just being nice. In the case of our code challenge (PCC17), how many times are you going to run your Py script while building the app to test if it works? Every time you run that script with your requests.get code in place, you’re making a call to the target web server. This generates unnecessary traffic and load on that server, which is a pretty crappy thing to do! The nicer and Pythonic thing to do is to have a separate script that does the request once and saves the required data to a local file. Your primary scraping or analysis script then references the local file.

The binary mode is required to write the actual content of the XML page to your external file in the original format. This confused the hell out of me and resulted in me wasting time trying to convert the requests response data to different formats or writing to the external file one line at a time (which meant I lost formatting anyway!). Without binary mode, writing the response content fails with:

TypeError: write() argument must be str, not bytes

Notice that the final write statement uses response.content? Have any idea how long I spent thinking my use of the usual response.text was the only way to do this? Too damn long! Using the content option allows you to dump the entire XML file (as is) into your own local XML file.

Note for beginners: If you’re reading other people’s code, be prepared to see with statements where files are opened as f. The request line will generally read r = requests.get(URL). I’ve used full form names for the sake of this article, thus the words file and response in my code.

This is one of those things that we all just get used to doing. Pulling a feed down and saving it to a file is something Bob has done a thousand times, so he no longer has to give it any extra thought. For me, however, this took an entire night* of playing around because I’d never done it before and was assuming (silly me!) that the parsing code I’ve been using requests for was all I needed.

I also found that I had to scour a ton of StackOverflow posts and other documentation just to get my head wrapped around this concept correctly. So with this finally cleared up, it’s time to go attack some feeds!
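For anyone who wants to see the download-once-and-save idea in code, here is a minimal sketch rather than the exact script from the challenge. The helper names (save_raw, download_feed), the sample XML, and the feed_sketch.xml path are all made up for illustration. The demo at the bottom uses a bytes literal as a stand-in for response.content, so it runs without touching any server.

```python
import tempfile
import xml.etree.ElementTree as ET
from pathlib import Path


def save_raw(content, destination):
    """Write bytes to disk unchanged; 'wb' opens the file in binary write mode."""
    with open(destination, "wb") as file:
        file.write(content)


def download_feed(url, destination):
    """Request `url` once and keep an untouched local copy (hypothetical helper)."""
    # Imported here so the offline demo below runs without requests installed.
    import requests

    response = requests.get(url, timeout=10)
    response.raise_for_status()  # fail loudly instead of saving an error page
    save_raw(response.content, destination)  # .content is bytes, .text is str


if __name__ == "__main__":
    # Made-up stand-in for response.content so no server is contacted.
    sample = (
        b'<rss version="2.0">\n'
        b"\t<channel>\n"
        b"\t\t<title>Example feed</title>\n"
        b"\t</channel>\n"
        b"</rss>\n"
    )
    target = Path(tempfile.gettempdir()) / "feed_sketch.xml"
    save_raw(sample, target)

    # The saved file is byte-for-byte identical, tabs and all.
    print(target.read_bytes() == sample)

    # Text mode ("w") rejects bytes, which raises the TypeError quoted in the article.
    try:
        with open(target, "w") as file:
            file.write(sample)
    except TypeError as error:
        print(f"TypeError: {error}")

    # The analysis script then works from the local copy, not the server.
    save_raw(sample, target)  # restore: opening with "w" truncated the file
    root = ET.parse(str(target)).getroot()
    print(root.findtext("channel/title"))
```

In real use you would call download_feed once with your own feed URL and a local filename, then point the parsing script at that file for every later test run.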